Table of contents

- Part 1

Prepare to Train a Deep Learning Model

- Part 2

Train Simple Deep Learning Models

- Part 3

Train Advanced Deep Learning Models

Understand Deep Learning

Hello, and welcome to this course! My name is Radu, and I am a data scientist by trade. I am very excited to teach you about deep learning! When I first switched from a career in software engineering to a career in data science, I had to learn many things from scratch, and let me tell you, some things were a lot easier to learn than others! My goal in this course is to teach you about deep learning in the way I would have liked to have learned it back then! So, let's begin by understanding what deep learning is and how it's applied in the real world.

Identify Differences Between Deep Learning and Classic Machine Learning

What Is Deep Learning?

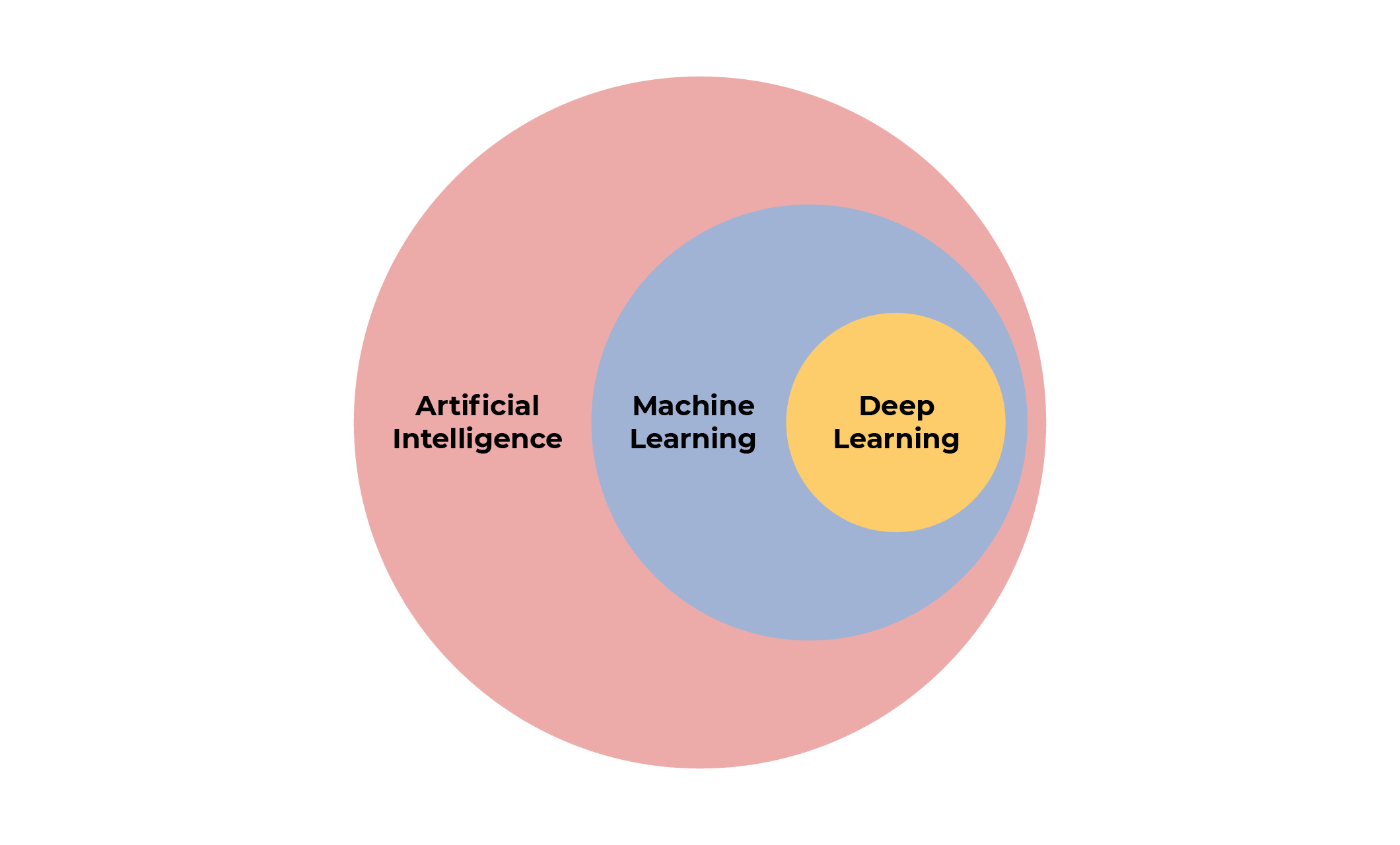

Deep learning is a subset of machine learning, which is part of the broader field of artificial intelligence. It's a bit like Russian dolls when you think about it! If it still isn't clear to you, check out the diagram below to see how they fit together.

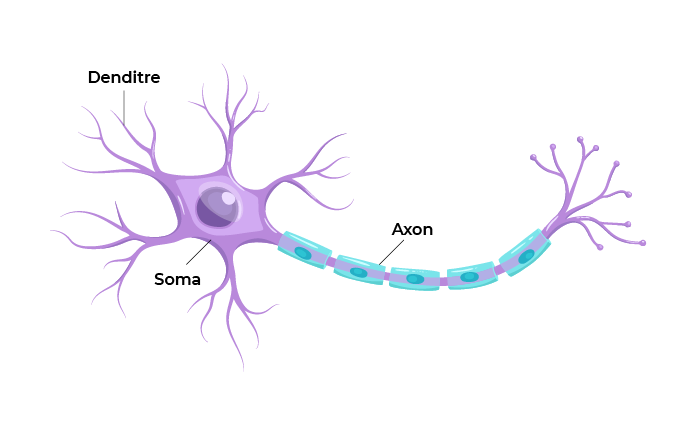

Most neurons in the body receive an input signal via parts called dendrites. The message gets processed in the soma (the body of the neuron), and once the cell reaches a certain voltage threshold, an impulse is fired down the axon. This is the basis of neural communication. Once the impulse reaches the end of the axon, it passes to a neighboring neuron via a synapse.

Artificial neurons work similarly in that they receive input, process it, and then produce an output. They are usually built as parallel units in a layer. The function of the synapse is replicated by connecting those layers.
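To make the analogy concrete, here is a minimal sketch of a single artificial neuron that "fires" once its weighted input reaches a threshold, loosely mirroring the voltage threshold described above. The function name, weights, and threshold are illustrative assumptions, not values from this course.

```python
def artificial_neuron(inputs, weights, threshold):
    """Return 1 (fire) if the weighted sum of the inputs reaches the threshold, else 0."""
    # Receive input and process it: each input is scaled by a connection weight.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # Produce an output: fire only when the combined signal is strong enough.
    return 1 if weighted_sum >= threshold else 0


# Hypothetical connection strengths, chosen only for illustration.
weights = [0.6, 0.9, 0.5]

# Two active inputs push the sum to 0.6 + 0.5 = 1.1, so the neuron fires.
fired = artificial_neuron([1.0, 0.0, 1.0], weights, threshold=1.0)

# A single active input gives 0.6, which stays below the threshold.
quiet = artificial_neuron([1.0, 0.0, 0.0], weights, threshold=1.0)
```

Neurons used in practice usually replace the hard threshold with a smooth activation function, but the receive, process, output pattern is the same.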

Let’s delve deeper!

What Differentiates Deep Learning From Other Machine Learning Methods?

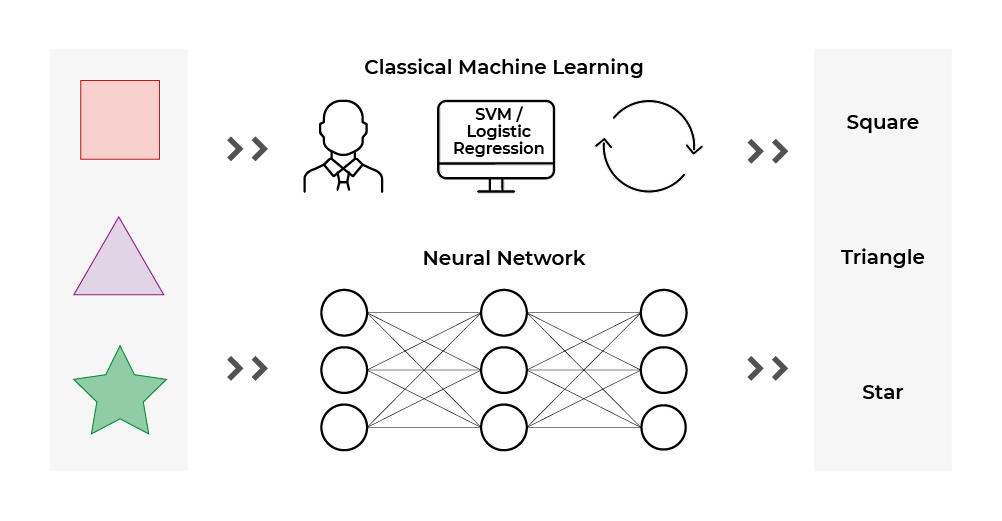

To better understand how deep learning is different from other machine learning techniques, consider this example: you have to build a shape detector to distinguish between squares, triangles, and stars.

With traditional machine learning, you would find yourself doing a lot of manual feature selection and manipulation, also known as feature engineering (for a refresher about feature engineering, check out the course called Train a Supervised Machine Learning Model). You would have to actively think about the precise characteristics that define these shapes and how to extract that information into features that a machine learning algorithm could understand. For example:

- You could build a color to black and white converter. It would make it easier to spot the differences in shapes and understand how they are built (lines, corners).

- You could build a corner detector. Each shape has a different number of corners: Stars have five corners, triangles have three, squares have four.

- You could build a line detector. Every shape is built out of lines. Stars have ten lines, triangles have three, squares have four.

A little tedious, though, right?
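The manual approach described above can be sketched in a few lines. This is a hedged illustration, assuming each shape is already available as a list of outline points in counter-clockwise order (a simplification, since a real detector would first have to extract that outline from an image). All function names are hypothetical.

```python
import math


def count_lines(vertices):
    """Hand-built feature: a closed outline has one line per vertex."""
    return len(vertices)


def count_corners(vertices):
    """Hand-built feature: count the outward-pointing corners of the outline.

    Assumes counter-clockwise vertices, so an outward corner is a left turn.
    """
    n = len(vertices)
    corners = 0
    for i in range(n):
        ax, ay = vertices[i - 1]
        bx, by = vertices[i]
        cx, cy = vertices[(i + 1) % n]
        cross = (bx - ax) * (cy - by) - (by - ay) * (cx - bx)
        if cross > 0:
            corners += 1
    return corners


def classify(vertices):
    """Hand-written rules on top of the hand-built features."""
    rules = {(3, 3): "triangle", (4, 4): "square", (5, 10): "star"}
    return rules.get((count_corners(vertices), count_lines(vertices)), "unknown")


def star_vertices(points=5, outer=1.0, inner=0.4):
    """Generate a star outline by alternating outer and inner radii."""
    vertices = []
    for k in range(2 * points):
        radius = outer if k % 2 == 0 else inner
        angle = math.pi / 2 + k * math.pi / points
        vertices.append((radius * math.cos(angle), radius * math.sin(angle)))
    return vertices


triangle = [(0, 0), (1, 0), (0, 1)]
square = [(0, 0), (1, 0), (1, 1), (0, 1)]
star = star_vertices()
```

Every feature and every rule here was designed by hand, and the rules break as soon as a new shape appears, which is exactly the tedium the text describes.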

Why Is Deep Learning so Important Now?

Do you have the feeling that deep learning is all over the internet these days? Does it seem like a new field was just born? Well, let me tell you about its history. You'll be surprised!

The idea of neurons and neural networks has been around for quite some time. Artificial neurons were first proposed in the 1940s by neurophysiologist Warren McCulloch and mathematician Walter Pitts. The research went on for some years, and in 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized backpropagation, the technique that underlies how neural networks learn (we will see this later).

At the time, however, computers proved very slow at doing the number of mathematical operations required to train the networks, and data was not as easy to come across as today. Therefore, many researchers turned away from this subfield, focusing on other machine learning techniques.

Fast forward to today, and a lot of these drawbacks have been overcome:

- There is now more processing power, which enables networks to be trained faster. In the early 2000s, Ian Buck and a team of researchers showed that graphics processors, purpose-built to do fast mathematical operations, could be programmed for general-purpose computation. That work was a precursor of NVIDIA CUDA, one of the first programming platforms that helped run neural networks on graphics processing units (GPUs). As time went by, other companies also started their own versions, and nowadays, entire clusters of GPUs are commonly used to train machine learning systems.

- Data is available in huge amounts, which enables the networks to spot intricate patterns. We humans love to share everything we do, and we produce more data every day. In 2019, a Forbes report tried to put a number on how much data we produce every minute.

Powerful computers, together with large datasets, now allow us to build vast neural networks and obtain extremely powerful AI systems for tasks such as translation, automated driving, and more.

How Are Neural Networks Built?

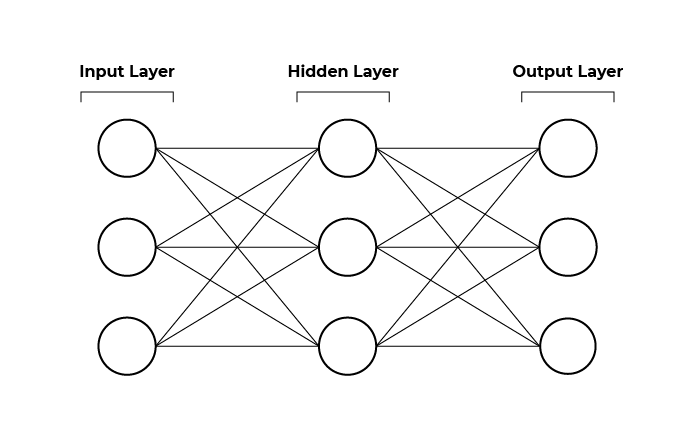

To build the networks, you first stack multiple neurons, one on top of another, in what is known as a layer. You make several of these layers and then connect them. The first layer of your neural network is your input layer, and the last one is the output layer. Deep learning derives its name from the fact that these networks have many middle layers, commonly known as hidden layers.
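As a rough sketch of this stacking, the snippet below passes an input through two hidden layers and an output layer, with each neuron connected to every value coming from the previous layer. The weights, biases, and layer sizes are made-up numbers for illustration only. A trained network would learn them from data.

```python
import math


def relu(z):
    """Common hidden-layer activation: keep positive values, zero out the rest."""
    return max(0.0, z)


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_output(inputs, weights, biases, activation):
    """One layer: each row of weights belongs to one neuron in the layer."""
    return [
        activation(sum(x * w for x, w in zip(inputs, neuron_weights)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Feed the input through every layer in order and return the final output."""
    values = inputs
    for weights, biases, activation in layers:
        values = layer_output(values, weights, biases, activation)
    return values


# Hypothetical network: 2 inputs -> 3 hidden -> 2 hidden -> 1 output.
network = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1], relu),
    ([[0.2, 0.7, -0.5], [-0.6, 0.1, 0.3]], [0.0, 0.0], relu),
    ([[1.0, -1.0]], [0.0], sigmoid),
]

prediction = forward([1.0, 2.0], network)
```

Adding depth is just a matter of appending more entries to the `network` list, which is why the number of hidden layers is such a natural knob to turn.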

There are multiple ways to connect the layers for neural networks, and certain architectures have proven useful for some applications. Here are the ones you’ll learn about in this course:

- Fully connected neural networks - where every neuron in a layer is connected to every neuron in the next layer. This one is probably among the most common.

- Convolutional neural networks - widespread for image processing, this network builds filters through which data is processed.

- Recurrent neural networks - quite common with time series data (such as sensor data). This network combines past information with current data to make decisions.

We will get back to these later in the course.
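As a small preview of the convolutional and recurrent ideas, here is a simplified sketch of each: a filter sliding over a one-dimensional signal, and a running state that mixes past information with the current value. The filter values and weights are hand-picked assumptions. Real networks learn them during training, and real convolutional networks usually work on two-dimensional images.

```python
import math


def convolve_1d(signal, kernel):
    """Slide a small filter along the signal and record its response at each position."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def recurrent_pass(sequence, w_input, w_state):
    """Carry a state forward so each step combines the past with the current value."""
    state = 0.0
    states = []
    for value in sequence:
        state = math.tanh(w_input * value + w_state * state)
        states.append(state)
    return states


# A filter that responds to changes: positive on a rise, negative on a fall.
edges = convolve_1d([0, 0, 1, 1, 1, 0, 0], kernel=[-1, 1])

# A single pulse followed by silence: the state fades but still remembers the pulse.
states = recurrent_pass([1.0, 0.0, 0.0], w_input=1.0, w_state=0.5)
```

In `edges`, the nonzero entries mark where the signal changes. In `states`, the later values stay above zero even though the later inputs are zero, which is the "memory" that makes recurrent networks useful for sensor data.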

See Examples of Deep Learning in Action

Where Is Deep Learning Used?

Deep learning has become extremely prevalent. We are surrounded!

Translating

Whenever you use Google Translate, you use a network built on an architecture called the transformer and trained on large amounts of text in different languages, drawn from books and from organizations that publish their content in multiple languages. Check out this video, published by Google, that explains their technology!

Driving

Drive.ai, Waymo, Uber, and Tesla's self-driving cars use deep learning. Camera and sensor data pass through different network architectures so that the car can drive in varied road conditions and react to different situations. Check out this video about self-driving car technology!

Learning by Doing

DeepMind is researching applications of deep reinforcement learning, in which an AI system makes many complex decisions before it finds out whether it was right or wrong. For example, AlphaGo was the first computer program to beat a world champion, Lee Sedol, at the ancient game of Go. It trained itself by testing many different strategies and finding out only at the end, when it won or lost, whether those strategies were useful!

Getting Answers From a Vocal Assistant

Amazon uses deep learning to help Alexa convert our speech into text.

Cashier-Free Shopping

Alexa is probably not the only project you’ve heard about. Amazon now uses deep learning and computer vision to allow people to walk into a shop, grab what they need, and automatically pay for it. This video explains how it's done!

Let’s Recap!

Neural networks are a subset of all machine learning techniques. They are powerful in situations where there is a lot of data as they can learn without the need for a lot of manual feature engineering.

Neural networks are built out of layers of neurons. The first layer is the input layer, the middle layers are known as the hidden layers, and the last layer is the output layer.

There are three major, well-known types of neural networks: fully connected neural networks, convolutional neural networks, and recurrent neural networks, but more architectures are coming every day.

Learning a new skill or technique is one thing, but explaining how your newfound knowledge applies to the real world is the key to success. To do so, you are going to learn through a real-world scenario. In the next chapter, you will become familiar with the project requirements for a few different deliverables at Worldwide Pizza Co. This mission represents a very likely request for an AI engineer. Throughout the course, you will be applying what you learn to complete these deliverables, and doing so will prepare you for work in the real world.
