Table of contents

- Part 1

Construct a Definition of Artificial Intelligence

- Part 2

Discover the Societal Impact of Artificial Intelligence

- 1

Discover the Opportunities Offered by Artificial Intelligence

- 2

Identify Artificial Intelligence Safety Concerns

- 3

Identify the Challenges in Creating Responsible and Trustworthy AI

- 4

Assess the Impact of Artificial Intelligence in the Workplace

Quiz: Discover the Societal Impact of Artificial Intelligence

- Part 3

Describe the Inner Workings of an Artificial Intelligence Project

- Part 4

You've Reached the End!

Understand General-Purpose AI Models

These AI models are complex and costly to develop. They are sometimes known as foundation models because they can be reused and adapted downstream by different users and applied to other use cases. Users can provide new training data to a preexisting AI model to optimize its performance on a specific task, a technique known as fine-tuning. It is a bit like a supplier that sells its shampoo to a cosmetics company, which then applies its own label to target a specific customer base.
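To make the idea of fine-tuning more concrete, here is a minimal sketch in Python. It is a toy, not how large models are actually adapted: the "model" is a single straight line, and the starting parameters, the dataset, and the function names are all invented for illustration. What it does show is the principle: training does not restart from scratch, it continues from parameters that already exist.

```python
# Toy illustration of fine-tuning: start from "pretrained" parameters and
# continue training on a small new dataset. All numbers are made up.

def predict(w, b, x):
    """A one-parameter-pair 'model': a straight line."""
    return w * x + b

def mean_squared_error(w, b, data):
    """Average squared gap between predictions and expected answers."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, learning_rate=0.05, steps=200):
    """Run plain gradient descent on the new data, starting from (w, b)."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Hypothetical "pretrained" parameters, learned earlier on general data.
pretrained_w, pretrained_b = 2.0, 0.0

# Small task-specific dataset that follows y = 2x + 1.
new_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, new_data)

error_before = mean_squared_error(pretrained_w, pretrained_b, new_data)
error_after = mean_squared_error(tuned_w, tuned_b, new_data)
print(f"error before fine-tuning: {error_before:.4f}")
print(f"error after fine-tuning:  {error_after:.4f}")
```

The pretrained parameters are already close to what the new task needs, so a short run on four examples is enough to shrink the error. Real fine-tuning follows the same logic with billions of parameters instead of two.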

Narrow AI performs very well on single, precise tasks but cannot perform other tasks that may seem straightforward. For a long time, we could only develop narrow AI systems. The AI system that defeated the world’s best chess player in 1997 could not beat humans at other games and was even less useful at performing completely different tasks, such as answering questions or manipulating objects.

By contrast, general AI (sometimes called AGI) can carry out a wide range of tasks just as well as, and sometimes even better than, a human. Examples include conversing, planning tasks, programming machines, and manipulating objects. Over the last few years, cutting-edge AI laboratories have developed increasingly general AI systems. Natural Language Processing AI systems, such as OpenAI’s chatbot, ChatGPT, can now ace standard math tests and med school exams, code websites, and write job application letters. Although AI experts currently disagree about whether general AI could become as intelligent as humans in all subject areas, it’s clear that AI systems can already perform many tasks better than humans.

Discover an Example of General-Purpose AI: Generative AI

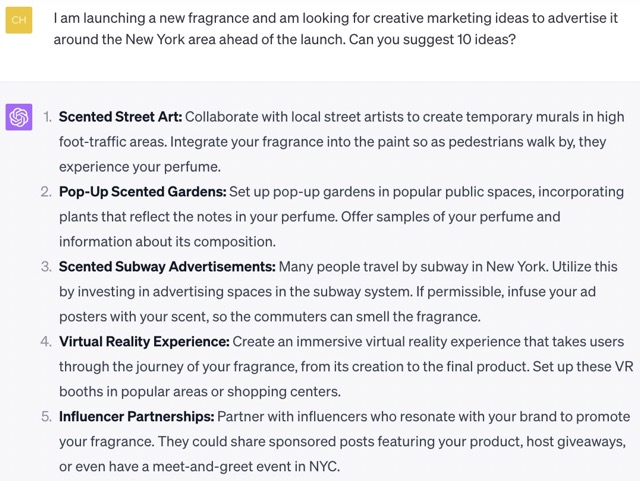

Some of today’s AI systems can perform various content-creation tasks. These are called generative AI models. One example is ChatGPT, which allows a human to converse with a machine in natural language.

ChatGPT is the best-known example of generative AI, but it’s by no means the only one.

There are several different types of generative AI:

Text generators, known as “language models.” This applies to ChatGPT, LLaMa by Meta, Ernie by Baidu, and Google’s Bard. Their role is to generate meaningful text. They can complete a phrase, converse with a human, or modify an existing text by following prompts in their chosen language (English, French, Spanish, etc.). They can also write code using programming languages.

Image generators can create a new image based on a simple text description. Users can request anything from a realistic, photo-like image to an artistic, conceptual design. Examples of image generators include DALL-E (by OpenAI, the creators of ChatGPT), Midjourney, and Stable Diffusion.

Here are some examples of images generated by Midjourney.

The most disturbing fact is that these people don’t exist—AI has completely fabricated them. You probably guessed that for the koala playing the guitar!

Audio content generators create voice and music audio from text (voice generation is also known as “text-to-speech”). While some of these AI systems are still in their infancy, the results are promising and evolving fast. Examples include ElevenLabs, Coqui.ai, and OpenAI Jukebox.

Video generators—such as Runway, Synthesia.io and D-ID—are recent developments and growing exponentially. Creating a video based on simple text instructions is now becoming a reality. One day, we could even create films on demand!

AI systems that generate different types of content—text, images, video, sound, etc.—are multimodal generative AI models.

Some of these AI systems are open source, meaning the AI code is available to everyone—particularly developers who want to copy it. Others are operated using an API, which is a tool that accesses the AI model on behalf of the user without them having direct access. The sheer abundance of solutions can make far-reaching innovations much easier.

Learn How Generative AI Works

Let’s focus on the most famous example of them all: ChatGPT. GPT stands for Generative Pre-trained Transformer.

Still trying to understand what that means? Let’s take a closer look:

Generative simply means that the model aims to generate content: text, images, video, or all of these at once if it is a multimodal generative AI.

Pre-trained means that the AI system has already received some training. It has been given millions of books, websites, and Wikipedia pages to read. This training gives it an understanding of the world and the connection between different words (or other forms of content). The knowledge it acquires goes up to a certain point in time (the date of its last training session).

Transformer is the name of the algorithm that GPT is based on. It was invented by Google researchers and published in a famous paper called Attention Is All You Need. This paper revolutionized the AI world because it meant that a computer could focus on the most relevant information quickly. It also processed many tasks in parallel, making the best use of computing power to perform its tasks.
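The "focus on the most relevant information" idea can be sketched in a few lines of Python. The paper describes an operation called scaled dot-product attention; the version below is a simplified, single-query illustration of it, not a full Transformer. The vectors are made-up two-dimensional values, and the function names are chosen here for readability.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # How well does the query match each key?
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Blend the values, giving more weight to the best-matching positions.
    output = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return weights, output

# Hypothetical 2-dimensional vectors standing in for three words.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [2.0, -1.0]  # matches the first key best, the second key worst

weights, output = attention(query, keys, values)
print("attention weights:", [round(w, 3) for w in weights])
print("blended output:   ", [round(o, 3) for o in output])
```

The weights show where the model "looks": the first word gets the largest share and the second the smallest. Because each score is an independent calculation, many of them can be computed at the same time, which is where the parallelism comes from.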

ChatGPT is a conversational tool based on text-generating AI trained by being fed a whole raft of books and websites to read, and it works using the Transformer algorithm originally designed by Google researchers. Wow!

Okay, but what exactly does ChatGPT do?

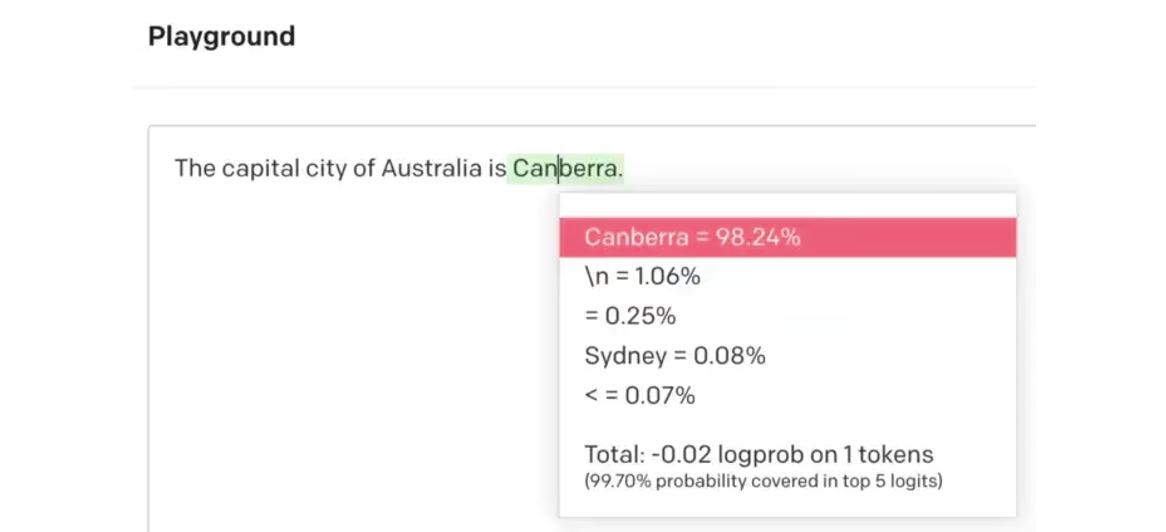

The fundamental principle of GPT is to guess the next word relevant to the text. If you give GPT a text, it will do its best to complete it by following your instructions.
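As a rough illustration of "guessing the next word", here is a deliberately tiny sketch in Python. It only counts which word follows which in a three-sentence, made-up corpus, which is far simpler than what GPT does internally. The corpus and the function names are assumptions made for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up "training corpus"; real models read vastly more text.
corpus = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)

def count_next_words(text):
    """Count, for each word, which words follow it and how often."""
    words = text.split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def complete(following, prompt, max_new_words=4):
    """Extend the prompt by repeatedly adding the most frequent next word."""
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = following.get(words[-1])
        if not candidates:
            break  # the last word was never seen during "training"
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

following = count_next_words(corpus)
print(complete(following, "the dog"))
```

With this corpus the completion is "the dog sat on the cat", a sentence that never appears in the training text. The program has simply chained together the most frequent continuations, with no idea whether the result is true.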

Let’s take an example:

“The capital city of Australia is...”

The correct answer is Canberra, and in this example it was the most probable answer, rated at 98.24%. However, GPT (and, by extension, ChatGPT) has no concept of truth. All it knows is that “Canberra” is the word most likely to follow this text. Sydney (the wrong answer) also receives some probability, probably because many people make this mistake in the texts used for training.

In the same way, if you ask it a question, it will do its level best to give you a plausible response, but it won’t necessarily be correct!
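The sketch below shows, in Python, how a set of scores can be turned into probabilities for the next word. The scores are invented for this example and are not real GPT outputs, so the resulting percentages will not match the figure quoted above. The point is the mechanism: the model ranks candidate words by likelihood, and nothing in the calculation checks whether the winner is correct.

```python
import math
import random

def to_probabilities(scores):
    """Convert raw scores into probabilities that sum to 1 (softmax)."""
    highest = max(scores.values())
    exps = {word: math.exp(s - highest) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

# Made-up scores for words that could follow
# "The capital city of Australia is...". Not real model outputs.
scores = {"Canberra": 6.0, "Sydney": 2.0, "Melbourne": 0.5, "a": -1.0}

probabilities = to_probabilities(scores)
best_word = max(probabilities, key=probabilities.get)

for word, p in sorted(probabilities.items(), key=lambda item: -item[1]):
    print(f"{word:10s} {p:.2%}")
print("most likely next word:", best_word)

# Chat tools often sample from these probabilities instead of always taking
# the top word, so a less likely (and possibly wrong) word can still appear.
rng = random.Random(0)
sampled = rng.choices(
    list(probabilities), weights=list(probabilities.values()), k=5
)
print("five sampled answers:", sampled)
```

If the training texts had mentioned Sydney more often in this context, its score would be higher and the wrong answer could come out on top.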

Consider the Safety Issues of General-Purpose AI

ChatGPT goes further than just using the GPT model. The ChatGPT development teams at OpenAI use several other strategies to make it more coherent and to prevent it from providing potentially dangerous information, for example in response to distressing questions such as “What is the best way to take your own life?”

One of these strategies is reinforcement learning from human feedback (RLHF), a technique that attempts to provide the AI with human preferences. Remember the chapter on AI safety and take a little time to consider the following:

Does this technique resolve all the difficulties in specifying our preferences to the AI system?

Who gets to provide human preferences to the AI system, and based on which criteria?

How can we indicate all undesirable behavior to the AI system?

Are we capable of defining all of AI’s potentially undesirable behavior?

These developments raise several questions that were previously the stuff of science fiction.

It’s becoming challenging to distinguish an image or text generated by AI from something a human produces. This major paradigm shift raises questions around disinformation, bias, impact on jobs, and the balance of power, as we’ve seen in previous chapters. Generative AI has made it frighteningly easy to create fake images and texts. AI also invents information that appears plausible but is false (such as giving the wrong capital of Australia!). We must always be ready to apply our critical thinking skills!

These risks and concerns are shared by many scientists and also by the businesses that create AI systems, such as OpenAI. Some call for pausing AI development, which seems unlikely, whereas others demand increased regulation.

Understand Technical Advances: Narrow AI is Becoming Increasingly General

Generative AI is interesting because it started as “narrow AI” but began acquiring new, unanticipated features.

In text generation, ChatGPT started with the simple task of completing a piece of text (i.e., finishing a sentence). Without being specifically coded to do so, ChatGPT is now capable of:

- rewriting a text in a different tone of voice
- summarizing a text
- solving mathematical problems
- correcting spelling and grammar mistakes
- translating a text into any language
- brainstorming ideas
- analyzing feelings in a text
- creating a reasoned argument for a problem (ask ChatGPT about the risks and advantages of using an AI system like ChatGPT, and you’ll get some exciting results!)

Image generators like DALL-E were first asked simply to generate new images. That is fascinating enough on its own, but new features have emerged through use:

- Creating variations on an existing image
- Enlarging images and creating areas around the image
- Upscaling images to improve definition and provide more precision (although TV police and spy dramas have been doing this for years!)
- Removing the image’s background
- Replacing objects in an existing image
- Realistically colorizing black and white images
- Creating animations from a simple, static image

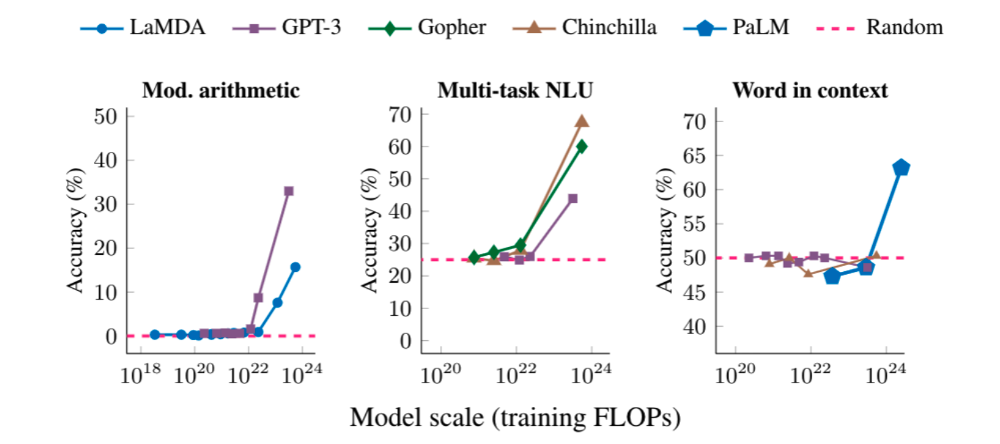

By feeding an AI model (giving it more data to read and more capacity to analyze the data using increased computing power), the model becomes effective at performing new tasks that it was previously unable to carry out, as we can see from the following graphics from Google Research:

Once it reaches a certain size, the AI model can suddenly process complex calculations, handle multitasking questions and understand the context around a particular word.

The development of these technologies is advancing at an astonishing rate. It might turn out that model capabilities will plateau, limiting new features for several years. It might even turn out that AI will help accelerate its own technical advancement. All we know is that AI is still surprising us with what it's capable of.

However quickly the technology advances, large-scale development of general-purpose AI systems that can carry out an ever-increasing number of tasks raises many fundamental questions that concern society. Now you understand how they work, take some time to think about it!

Let’s Recap!

General-purpose AI models, also known as “foundation” models, can perform many different tasks, unlike narrow AI, which performs specific tasks. A single general AI model can carry out numerous tasks just as well as a human, if not better.

Generative AI can handle many tasks relating to generating content, such as text, images, videos, sound, etc.

ChatGPT is a well-known example of generative AI. This text-generating AI system is pre-trained by being made to read many books and websites. This conversational tool is based on predicting the most likely next word in a text, but it cannot tell what is true from what is false.

You've almost reached the end of this course. It's time for a last quiz to test your knowledge. Let's go!
